TL;DR

You don’t need a complex data protection strategy to reduce risk. By focusing on these 5 core backup best practices, you can address the majority of practical threats.

Most data protection plans fail for a simple reason: they try to do too much at once.

Companies add more tools, more layers, and more policies, but often overlook the small number of actions that actually make the biggest difference.

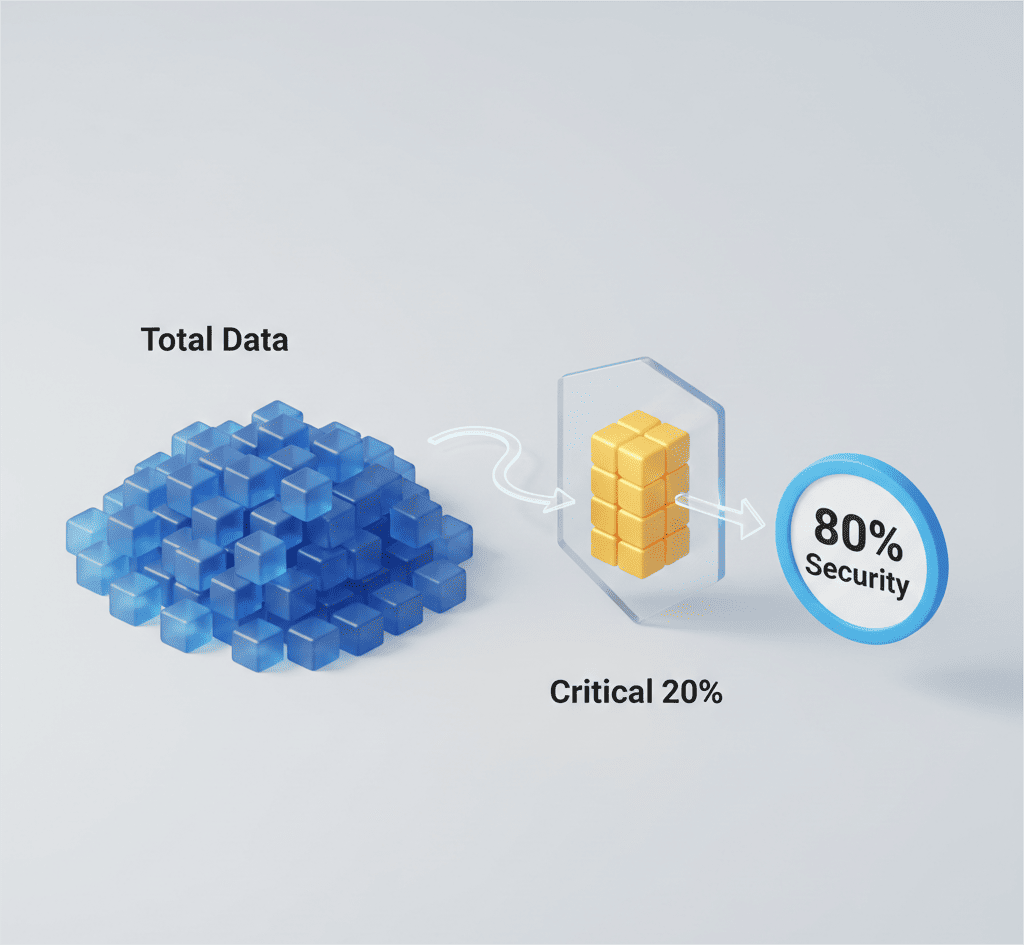

The Pareto Principle, also known as the 80/20 rule, offers a helpful way to think about this. In many systems, a focused set of actions drives most of the meaningful results. Data protection is no different.

Security is not a perfect equation. But when it comes to protecting critical data, especially in cloud platforms like Egnyte or Autodesk, a few well-executed backup best practices can significantly reduce risk.

In this blog, we’ll break down five practical steps that provide the greatest impact when backing up your data, from identifying what truly needs protection to ensuring recovery outside the primary environment.

Why the 80/20 Rule Applies to Data Protection

Companies are generating more data than ever before. Industry analysts estimate that global data volume continues to grow at double-digit rates year after year. At the same time, ransomware, accidental deletion, and misconfiguration remain leading causes of data loss.

Yet despite increased spending on cybersecurity, many incidents still trace back to missing fundamentals:

- No independent backup

- No tested recovery plan

- No clear ownership of critical data

- No validation process

Security expert Bruce Schneier once said:

“Security is a process, not a product.”

That idea fits perfectly here. A strong data protection strategy is never about stacking tools. It’s about consistently applying the few controls that matter most.

Let’s look at those controls.

Step 1: Identify What Truly Needs Protection

Remember this: Not all data carries the same risk.

One of the most common mistakes in backup planning is treating every file equally. Critical operational data, financial records, intellectual property, and regulated content have a far greater impact if lost.

Start by asking:

- What data would stop operations if unavailable for 24 to 48 hours?

- What data is subject to compliance or legal requirements?

- What data changes daily versus rarely?

This step alone will dramatically strengthen your data protection strategy. When you know what’s important, you can prioritize protection instead of spreading resources too thin.

The goal of a strong data protection strategy is to protect data intentionally, rather than backing up everything without prioritization.
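To make the three questions above concrete, here is a minimal sketch of how a data inventory could be sorted into priority tiers. The tier names, the `Dataset` fields, and the sample entries are all hypothetical; your own criteria may weigh these questions differently.

```python
from dataclasses import dataclass


@dataclass
class Dataset:
    name: str
    stops_operations: bool  # would operations stop if it were unavailable for 24 to 48 hours?
    regulated: bool         # is it subject to compliance or legal requirements?
    changes_daily: bool     # does it change daily rather than rarely?


def priority_tier(ds: Dataset) -> str:
    """Return a hypothetical tier label: 'critical', 'important', or 'standard'."""
    if ds.stops_operations or ds.regulated:
        return "critical"
    if ds.changes_daily:
        return "important"
    return "standard"


# Illustrative inventory; the names are made up for this example.
inventory = [
    Dataset("finance-ledgers", stops_operations=True, regulated=True, changes_daily=True),
    Dataset("active-project-files", stops_operations=False, regulated=False, changes_daily=True),
    Dataset("archived-marketing", stops_operations=False, regulated=False, changes_daily=False),
]

tiers = {ds.name: priority_tier(ds) for ds in inventory}
for name, tier in tiers.items():
    print(f"{name}: {tier}")
```

Even a short list like this gives you something to prioritize against, which is the point of the step.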

Step 2: Build Backup Schedules Around Business Priorities

Data behaves differently depending on how it’s used, and your backup schedule should reflect that.

Some folders are updated every hour. Others barely change all year. Yet many companies apply the same backup schedule across everything because it’s easier to manage.

If fast-changing data is only backed up occasionally, you could lose days of work in a single incident. On the other hand, backing up static data too frequently wastes storage and resources.

A practical data protection strategy starts with one question:

If this data is lost today, how serious is the impact?

If losing it would stop operations, delay projects, or affect customers, it needs frequent backups. If it’s rarely used or easily recreated, the urgency is lower.

In short, your backup frequency should reflect risk, not convenience.
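One way to turn that question into a schedule is sketched below. The impact levels and the intervals attached to them are assumptions chosen for illustration, not recommendations; the interval is also roughly the most work you could lose in a single incident.

```python
from datetime import timedelta

# Hypothetical intervals per impact level; tune them to how much lost work you can tolerate.
INTERVALS = {
    "high": timedelta(hours=1),
    "medium": timedelta(days=1),
    "low": timedelta(weeks=1),
}

# Assumed floor for data that rarely changes, so static data is not backed up needlessly often.
STATIC_DATA_FLOOR = timedelta(weeks=1)


def backup_interval(impact: str, changes_often: bool) -> timedelta:
    """Pick a backup interval from the impact of losing the data and how often it changes."""
    interval = INTERVALS[impact]
    if not changes_often:
        # Rarely changing data gains little from frequent backups.
        return max(interval, STATIC_DATA_FLOOR)
    return interval


print(backup_interval("high", changes_often=True))   # fast-changing, high impact
print(backup_interval("high", changes_often=False))  # high impact but static
```

The design choice here is that impact sets the baseline and change rate can only relax it, so fast-changing critical data is never scheduled for convenience.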

Step 3: Preserve Structure, Permissions, and Versions

A backup is only useful if it can restore the environment exactly as it was, including:

- Folder structure

- Access permissions

- Version history

Without these, restoration becomes a manual rebuild project. Version control and permission integrity are core backup best practices because they protect against both malicious and accidental damage.

When evaluating a backup solution, it’s important to ensure it preserves this operational context. Solutions like Cloudsfer, for example, are designed to maintain permissions and structure during backup and migration processes, helping organizations restore a working environment.
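As a rough illustration of what "preserving structure and permissions" means in practice, the sketch below records a manifest of a folder tree and compares it with a restored copy. It uses local POSIX permission bits as a stand-in for the access controls of a cloud platform, and it does not cover version history. The function names are hypothetical and not part of any product's API.

```python
import os
import stat
import tempfile
from pathlib import Path


def build_manifest(root: str) -> dict[str, dict]:
    """Map each relative path under root to its type and permission bits."""
    manifest = {}
    root_path = Path(root)
    for dirpath, dirnames, filenames in os.walk(root_path):
        for name in dirnames + filenames:
            full = Path(dirpath) / name
            info = full.lstat()
            manifest[full.relative_to(root_path).as_posix()] = {
                "is_dir": stat.S_ISDIR(info.st_mode),
                "mode": stat.S_IMODE(info.st_mode),
            }
    return manifest


def manifest_diff(source: dict, restored: dict) -> list[str]:
    """List paths that are missing, or whose metadata differs, after a restore."""
    return sorted(p for p in source if restored.get(p) != source[p])


# Demo: the "restored" copy keeps the folder structure but loses a permission setting.
with tempfile.TemporaryDirectory() as src, tempfile.TemporaryDirectory() as dst:
    for base, mode in ((src, 0o640), (dst, 0o600)):
        (Path(base) / "projects").mkdir()
        plan = Path(base) / "projects" / "plan.txt"
        plan.write_text("v1")
        plan.chmod(mode)
    drift = manifest_diff(build_manifest(src), build_manifest(dst))

print(drift)
```

On a POSIX system the demo flags `projects/plan.txt`, because its permissions changed even though the file and its folder were restored.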

Step 4: Validate Your Backups

A backup isn’t truly complete until you know it can be restored.

Recent industry surveys show a clear gap between confidence and reality. Over 60% of organizations believe they can recover quickly from downtime, yet only about 35% actually meet that expectation when tested. At the same time, roughly one in four companies tests disaster recovery just once a year or less.

The gap between what you believe is covered and what’s actually been validated is real.

Those numbers are concerning because a backup that hasn’t been tested might not work when you need it most. A skipped or incomplete restore test can miss:

- Corrupted data

- Missing dependencies

- Permission issues

- Version mismatches

Strong backup best practices include not only running backups but validating them regularly.
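A simple form of validation is to record checksums when the backup is taken and compare them after a test restore. The sketch below assumes that approach; it catches missing and corrupted files, but not dependency, permission, or version problems. The function names and sample files are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(1024 * 1024), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(expected: dict[str, str], restored_root: Path) -> dict[str, str]:
    """Compare recorded checksums with a test restore. Returns {relative_path: problem}."""
    problems = {}
    for rel_path, checksum in expected.items():
        candidate = restored_root / rel_path
        if not candidate.is_file():
            problems[rel_path] = "missing"
        elif sha256_of(candidate) != checksum:
            problems[rel_path] = "corrupted"
    return problems


# Demo: record checksums, then simulate a flawed restore in the same folder.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "ledger.csv").write_text("id,amount\n1,100\n")
    (root / "plan.txt").write_text("phase one")
    (root / "notes.txt").write_text("draft")
    recorded = {p.name: sha256_of(p) for p in root.iterdir()}

    (root / "plan.txt").write_text("phase 0ne")  # altered content
    (root / "notes.txt").unlink()                # lost file
    problems = verify_restore(recorded, root)

print(problems)
```

Running a check like this on a schedule, against a real test restore, is what turns "we have backups" into "we know they restore".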

Step 5: Ensure Recovery Outside the Primary Environment

This is the most important step. If your backup resides in the same environment as your production data, your risk exposure remains high.

Account lockouts, ransomware propagation, misconfiguration, and insider threats can affect primary systems and attached backups simultaneously.

True backup means:

- Independent storage location

- Separate administrative access

- Ability to restore without relying on the primary platform

This principle aligns with widely accepted backup best practices. In simple terms, recovery must not depend on what failed.
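The three criteria above can also be written down as a quick self-check. This is only a sketch: the `Environment` fields, the platform labels, and the account names are assumptions for illustration, and a real review would look at far more than three flags.

```python
from dataclasses import dataclass, field


@dataclass
class Environment:
    platform: str                                 # where the data is stored
    admins: set[str] = field(default_factory=set)  # accounts with administrative access


def independence_gaps(primary: Environment, backup: Environment,
                      restore_needs_primary: bool) -> list[str]:
    """Return the independence criteria that the backup setup fails to meet."""
    gaps = []
    if backup.platform == primary.platform:
        gaps.append("backup shares the primary storage location")
    if backup.admins & primary.admins:
        gaps.append("backup shares administrative accounts with the primary")
    if restore_needs_primary:
        gaps.append("restore depends on the primary platform")
    return gaps


# Hypothetical setups for illustration.
primary = Environment("cloud-a", {"it-admin"})
same_place = Environment("cloud-a", {"it-admin"})
independent = Environment("cloud-b", {"backup-admin"})

print(independence_gaps(primary, same_place, restore_needs_primary=True))
print(independence_gaps(primary, independent, restore_needs_primary=False))
```

An empty list means none of the three gaps was found, which is the minimum bar for saying that recovery does not depend on what failed.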

The Bigger Picture: Simplicity Wins

The global cost of downtime continues to rise. Studies from industry analysts consistently show that even short outages can cost organizations thousands and sometimes hundreds of thousands of dollars per hour.

A focused, practical data protection strategy built on proven backup best practices will reduce the majority of operational risk. Not because 20% of the effort literally yields 80% of the results, but because the fundamentals prevent the most common and most damaging failures.

Final Thought

Data protection is about doing the critical things well.

If your organization can clearly identify critical data, back it up appropriately, preserve its integrity, test recovery, and restore independently, you have already addressed the majority of practical risk.

And that’s the real power of the Pareto Principle in data protection.